Here’s a scenario: You are leaving to meet your friend Mark and you want to tell Alexa this:

- You: “Alexa, let Mark know when I am almost there”

- Alexa: “Okay”

To solve this you need to answer:

- Which Mark is relevant now? And, by the way, is it Marc or Mark?

- Where is “there”?

- Where is Mark – or where is he supposed to be in the near future?

- Where am I?

- How fast am I approaching Mark? (This decides what “almost” means.)

- How do I communicate with Mark? Text? Call? Or the brand-new “Echo calling”?

Then you need a system that executes this as a delayed, one-time action and automatically shares my location on his Google Maps until we meet.
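
As a rough illustration of the "delayed one-time action" idea, here is a minimal Python sketch. It assumes "almost there" means "my estimated time of arrival drops below a threshold", with the ETA approximated as straight-line distance divided by current speed. The class name `AlmostThereTrigger`, the 5-minute default, and the ETA model are all hypothetical choices for this example, not how Alexa or any real skill works.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two lat/lon points (degrees)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


@dataclass
class AlmostThereTrigger:
    """One-time trigger: fires once when the ETA drops below the threshold."""
    dest_lat: float
    dest_lon: float
    eta_threshold_s: float = 300.0  # assumption: "almost" = under 5 minutes away
    fired: bool = False

    def update(self, lat, lon, speed_mps):
        """Feed a new position fix; returns True only on the first qualifying fix."""
        if self.fired or speed_mps <= 0:
            return False  # already notified, or not moving so no meaningful ETA
        eta_s = haversine_m(lat, lon, self.dest_lat, self.dest_lon) / speed_mps
        if eta_s <= self.eta_threshold_s:
            self.fired = True  # one-time action: never fire again
            return True
        return False
```

Each time a new location fix arrives you would call `update(...)`, and send Mark the message the one time it returns `True`. Resolving which Mark, where "there" is, and which channel to use are separate problems that this sketch leaves out.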

Scenario 2: You don’t want to come home to a dark house.

- You: “Alexa, turn the kitchen lights on when I come home at night”

- Alexa: “Okay”

To solve this you need:

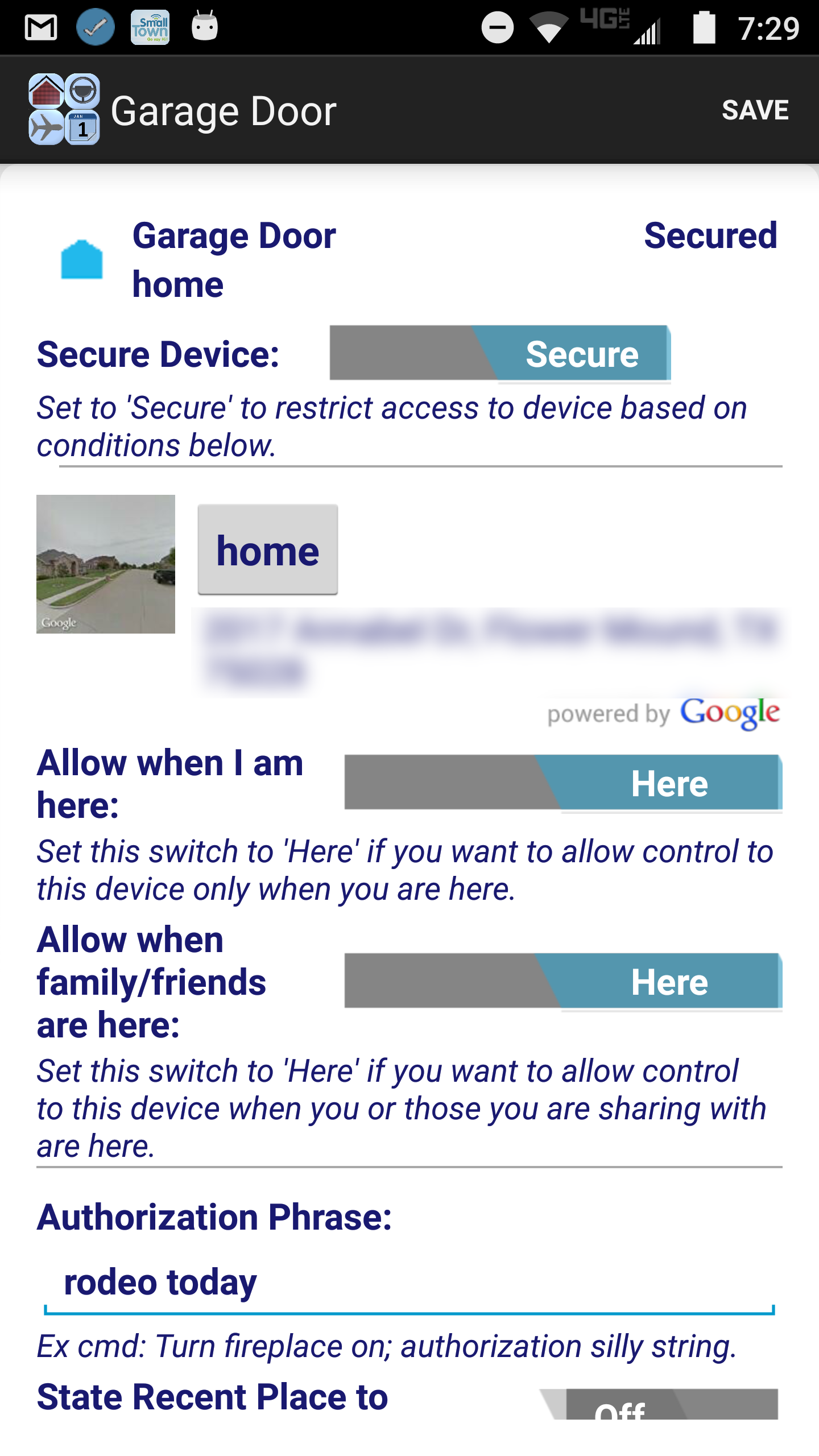

- Where is home?

- Where am I?

- Is it dark (when I am almost home)? And is that what I mean when I say “at night”?

- Am I going home?

- How do I communicate with the system that controls the kitchen lights?

- When do I turn them on? (Not a big deal for kitchen lights, but it could be for slow-to-brighten outdoor lights or the HVAC.)

- Save and execute this as a persistent action (“when” actually means “whenever”).
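
The difference from the first scenario is persistence: the rule has to fire on every qualifying arrival, not just once. A minimal Python sketch of that, assuming "at night" means "after sunset or before sunrise" and that some other component already tells us whether I am home. The names `ArriveHomeRule` and `is_dark`, and the idea of passing sunrise and sunset in as fixed times, are hypothetical simplifications for this example.

```python
from dataclasses import dataclass
from datetime import time


def is_dark(now: time, sunrise: time, sunset: time) -> bool:
    """Assumption: 'night' is any time before sunrise or at/after sunset."""
    return now < sunrise or now >= sunset


@dataclass
class ArriveHomeRule:
    """Persistent rule: fires on every away-to-home transition that happens in the dark."""
    sunrise: time
    sunset: time
    was_home: bool = True  # assume we start at home, so nothing fires at startup

    def update(self, is_home: bool, now: time) -> bool:
        """Feed the latest presence reading; returns True when the lights should go on."""
        arrived = is_home and not self.was_home  # edge detection: away -> home
        self.was_home = is_home                  # remember state for the next reading
        return arrived and is_dark(now, self.sunrise, self.sunset)
```

Because the rule only tracks the previous presence state and never disables itself, it keeps working for every future arrival, which is the "whenever" reading of "when". Turning the lights on slightly early (for slow outdoor lights or the HVAC) would need an "almost home" signal instead of a plain home/away flag.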

Scenario 3: You are cutting up a not-yet-fully-thawed whole chicken for dinner and the in-laws have arrived!

- You: “Alexa, unlock the side door”

- Alexa: “You ordered pizza for dinner earlier this week. Can you tell me where you ordered from?”

- You: “Enzo’s”

- Alexa: “Side door is unlocked”
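
The dialogue above amounts to a hands-free, knowledge-based check before a sensitive action. A minimal Python sketch of that gate, assuming the challenge question and its expected answer have already been drawn from my recent history by some other component. The `Challenge` type, the `SENSITIVE_ACTIONS` set, and the action names are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass

# Assumption: only some actions are risky enough to need a challenge.
SENSITIVE_ACTIONS = {"unlock_door"}


@dataclass
class Challenge:
    question: str
    expected: str  # answer derived from the user's own recent history


def normalize(answer: str) -> str:
    """Lowercase and drop punctuation/spaces so spoken answers match loosely."""
    return "".join(ch for ch in answer.lower() if ch.isalnum())


def authorize(action: str, challenge: Challenge, answer: str) -> bool:
    """Allow non-sensitive actions outright; otherwise require the right answer."""
    if action not in SENSITIVE_ACTIONS:
        return True
    return normalize(answer) == normalize(challenge.expected)


challenge = Challenge(
    question="You ordered pizza for dinner earlier this week. "
             "Can you tell me where you ordered from?",
    expected="Enzo's",
)
```

With this sketch, `authorize("unlock_door", challenge, "Enzo’s")` lets the side door unlock, while a wrong restaurant name does not. A real system would also want to limit retries and pick questions a visitor at the door is unlikely to know.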

All of this is contextual intelligence, and it is essential if natural language processing is to sound natural. Check out our concept integration as an Alexa skill: idid-inc.com/my-avatar